Raji is organizing the hunt for algorithmic errors on the model of the bug bounty campaigns that mobilize hackers. As is well known, large companies such as Google, Facebook and Microsoft all run formal programs in which they pay a bounty to whoever finds a previously unknown bug, thereby adding to what the company and anti-malware developers know.

The first bug bounty program was organized by Netscape in 1995. Last year, Google paid a total of $6.7 million to 662 security professionals who discovered vulnerabilities.

When software is released with a vulnerability that leaves it open to attack, the information security community has a variety of means to track down such errors. The problems that arise with algorithmic bias run parallel to this, Raji told ZDNet.

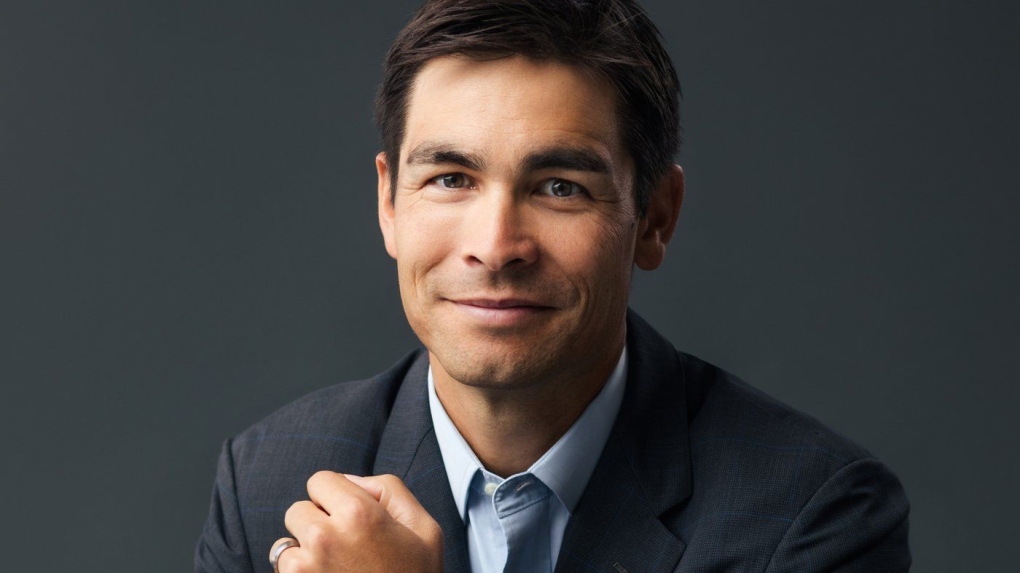

The Nigerian-Canadian researcher is organizing campaigns under the name CRASH (Community Reporting of Algorithmic System Harms) to search for errors in AI-based applications.

Companies won’t like it

AI systems are appearing in more and more applications, but for now there is no effective way to test them properly in operation. So far, obvious or harmful errors have been found by independent experts. This was the case when researchers at MIT and Stanford University found that commercial facial recognition programs were biased by skin tone.

Photo: Rajiinio, CC BY-SA 4.0 / Wikipedia

But the problem, Raji’s team realized, is that finding bias in algorithms is more difficult than finding an attack surface in a program.

One difficulty is defining what counts as harmful behavior in an algorithm and building a complete methodology for detecting it. The other, which affects the field more directly, is that companies tend to resist such findings: they are far less pleased with a reported bias than with a reported security bug that was never used against them.

For example, the team met with outright hostility from Amazon over the problems found in its Rekognition facial recognition service. Raji therefore does not trust corporate self-regulation to deliver ethical AI and believes that compliance with social norms can be achieved only through strong public pressure or through legislation.